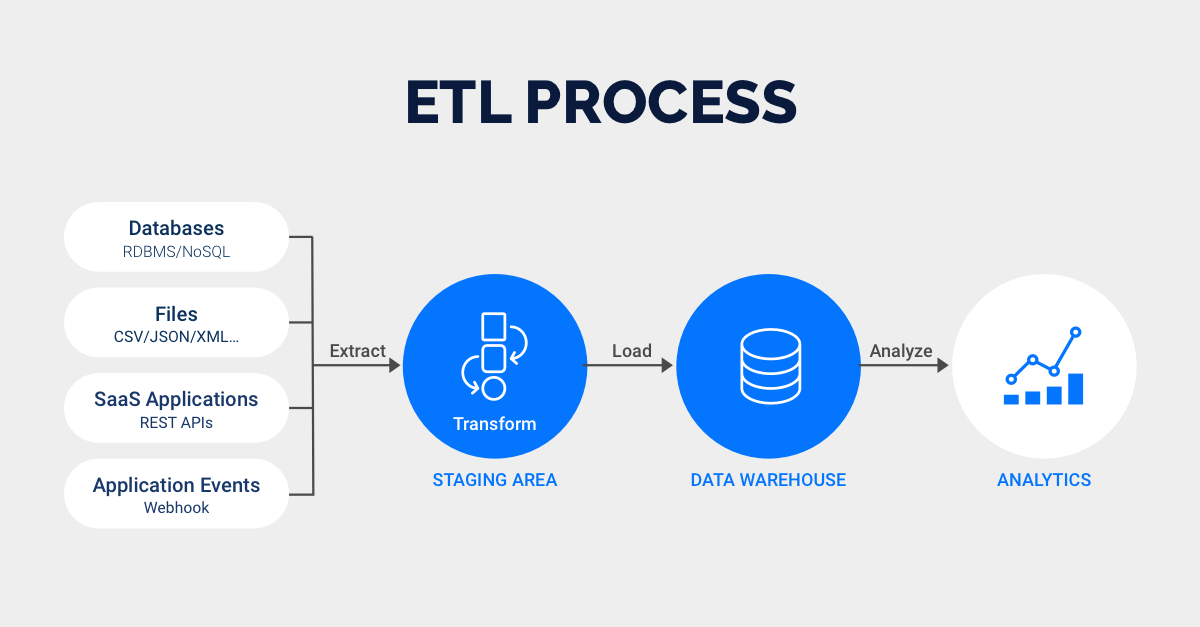

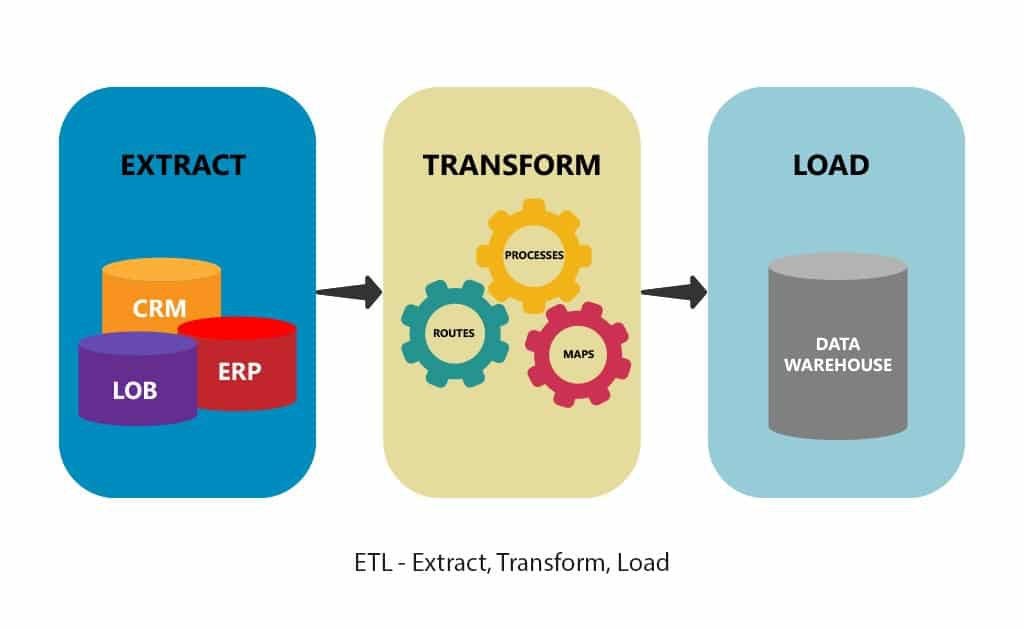

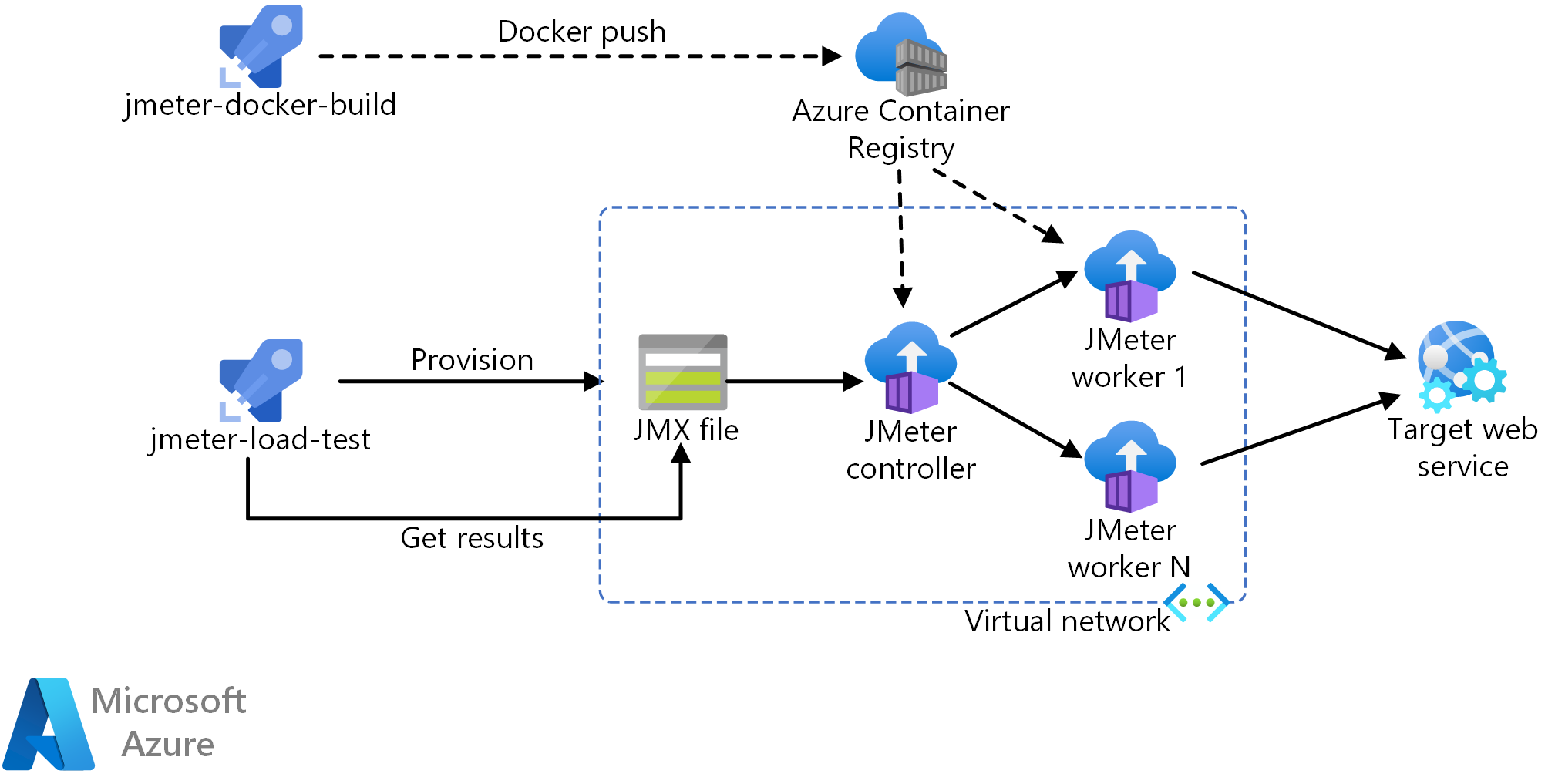

Stage data: You do not typically load transformed data directly into the target data warehouse. Instead, data first enters a staging database, which makes it easier to roll back if something goes wrong. At this point, you can also generate audit reports for regulatory compliance, or diagnose and repair data problems.

Publish to your data warehouse: Load data into the target tables. Some data warehouses overwrite existing information whenever the ETL pipeline loads a new batch, which might happen daily, weekly, or monthly. In other cases, the ETL workflow can add data without overwriting, including a timestamp to indicate that it is new. You must do this carefully to prevent the data warehouse from “exploding” due to disk space and performance limitations.

Building an ETL Pipeline with Stream Processing

Modern data processes often include real-time data, such as web analytics data from a large e-commerce website. In these cases, you cannot extract and transform data in large batches; instead, you need to perform ETL on data streams. Thus, as client applications write data to the data source, you need to clean and transform it while it is in transit to the target data store.

Many stream processing tools are available today, including Apache Samza, Apache Storm, and Apache Kafka. The accompanying diagram illustrates an ETL pipeline based on Kafka, as described by Confluent.

To build a stream processing ETL pipeline with Kafka, you need to:

Extract data into Kafka: the Confluent JDBC connector pulls each row of the source table and writes it as a key/value pair into a Kafka topic (a feed where records are stored and published).
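To make the "each row becomes a key/value pair" idea from the Kafka extraction step concrete, here is a minimal sketch in plain Python. It does not use the Confluent JDBC connector or a Kafka client at all; it only illustrates the shape of the records such a step might produce. The `invoices` table, its columns, the helper name `rows_to_records`, and the choice of JSON as the value encoding are all assumptions made for illustration.

```python
import json
import sqlite3


def rows_to_records(conn, table, key_column):
    """Yield one (key, value) pair of bytes per row of `table`.

    Hypothetical helper: the key is the row's `key_column` and the value is
    the whole row serialized as JSON. `table` is interpolated into the SQL,
    so only pass trusted, hard-coded identifiers.
    """
    conn.row_factory = sqlite3.Row  # rows become name-addressable
    for row in conn.execute(f"SELECT * FROM {table}"):
        record = dict(row)
        key = str(record[key_column]).encode("utf-8")
        value = json.dumps(record, sort_keys=True).encode("utf-8")
        yield key, value


# An in-memory table standing in for the real source database.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE invoices (id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
)
conn.executemany(
    "INSERT INTO invoices VALUES (?, ?, ?)",
    [(1, "acme", 120.0), (2, "globex", 75.5)],
)

records = list(rows_to_records(conn, "invoices", "id"))
for key, value in records:
    print(key, value)
```

In a real pipeline, each pair would be handed to a Kafka producer and written to a topic rather than collected in a list.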

You need to program numerous functions to transform the data automatically. For example, if you want to analyze revenue, you can summarize the dollar amount of invoices into a daily or monthly total.
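As a rough illustration of one such transformation function, the sketch below summarizes invoice amounts into daily or monthly revenue totals. The function name `summarize_revenue`, the `(date, amount)` input shape, and the sample invoices are assumptions made for this example, not part of any particular ETL tool.

```python
from collections import defaultdict
from datetime import date
from decimal import Decimal


def summarize_revenue(invoices, period="daily"):
    """Sum invoice amounts per day ("daily") or per month ("monthly").

    `invoices` is an iterable of (invoice_date, amount) pairs. Decimal is
    used so that currency amounts are not subject to float rounding.
    """
    if period not in ("daily", "monthly"):
        raise ValueError("period must be 'daily' or 'monthly'")
    totals = defaultdict(Decimal)  # missing keys start at Decimal("0")
    for invoice_date, amount in invoices:
        if period == "daily":
            bucket = invoice_date.isoformat()        # e.g. "2024-01-05"
        else:
            bucket = invoice_date.strftime("%Y-%m")  # e.g. "2024-01"
        totals[bucket] += amount
    return dict(totals)


# Made-up sample data for illustration only.
invoices = [
    (date(2024, 1, 5), Decimal("120.00")),
    (date(2024, 1, 5), Decimal("30.50")),
    (date(2024, 1, 20), Decimal("75.25")),
    (date(2024, 2, 1), Decimal("10.00")),
]

daily = summarize_revenue(invoices, "daily")
monthly = summarize_revenue(invoices, "monthly")
print(daily)
print(monthly)
```

A production pipeline would more likely push this aggregation into SQL or a dataframe library, but the grouping logic is the same.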
